Filters for Astrophotography

NOTE: This article has been published in Amateur Astrophotography Magazine issue #75

In astrophotography, as in conventional photography, we capture light at a certain moment and record it with the help of our photographic equipment. In the past this was done with different chemicals, films and papers; nowadays we use high-resolution silicon sensors with great light-gathering capacity.

To talk about filters in astrophotography properly, we first need to talk about light, since understanding its principles helps us understand how filters work.

The light

Light, as we understand it nowadays, has a dual wave-particle nature (it is somewhat complex to explain here and my knowledge of quantum physics is not as good as I would like, but for that we have Wikipedia). It is made up of "particles" called photons that behave like an electromagnetic wave moving at the speed of light.

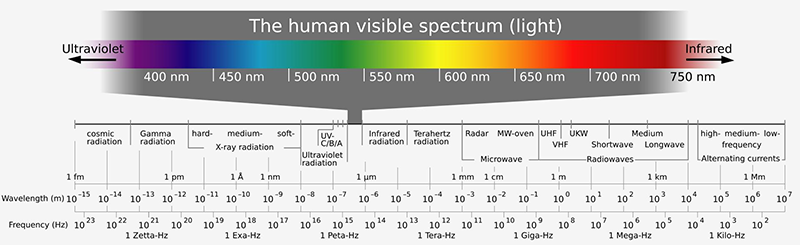

Visible spectrum

Depending on the frequency (or, equivalently, the wavelength) of a light beam, we can place it within the electromagnetic spectrum. Only a small portion of that spectrum is visible to humans: the wavelengths from roughly 380nm to 780nm, called the Visible Spectrum.

The rest of the electromagnetic spectrum

However, there are multiple frequencies of the electromagnetic spectrum that are not visible to humans, although many of us use them almost daily.

Wavelengths under 380nm lie beyond the violet end of the visible spectrum. In this part of the spectrum are ultraviolet or UV rays (as the name indicates, those just beyond violet, which we receive from our sun attenuated by the ozone layer), x-rays (used in medical radiologic diagnostic techniques) and gamma rays (cosmic explosions emit radiation in this part of the spectrum). These are high-energy rays, and the most energetic of them are capable of ionizing matter and breaking chemical bonds, making them dangerous for life.

Wavelengths greater than 780nm lie beyond the red end of the visible spectrum. Here we find infrared waves (the heat radiation from our bodies is emitted largely in this range), microwaves (which almost all of us use to heat food quickly) and radio waves (mobile phones in all their bands, WiFi, the remote that opens the garage, the radio that we listen to in the car and many other examples).
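To make the relationship between wavelength, frequency and the regions described above concrete, here is a minimal Python sketch. The function names are made up for illustration, and the 380nm and 780nm limits are simply the ones used in this article; other sources place the limits of the visible spectrum at slightly different values.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # exact by definition of the metre


def frequency_thz(wavelength_nm: float) -> float:
    """Frequency in terahertz for a vacuum wavelength given in nanometres."""
    wavelength_m = wavelength_nm * 1e-9
    return SPEED_OF_LIGHT_M_S / wavelength_m / 1e12


def spectral_region(wavelength_nm: float) -> str:
    """Classify a wavelength using the limits quoted in this article."""
    if wavelength_nm < 380:
        return "shorter than visible (ultraviolet, x-rays, gamma rays)"
    if wavelength_nm <= 780:
        return "visible"
    return "longer than visible (infrared, microwaves, radio)"


# The H-alpha line discussed later in the article sits in the visible red.
print(spectral_region(656.28), round(frequency_thz(656.28), 1), "THz")
```

Shorter wavelengths mean higher frequencies, which is why the two terms are often used interchangeably when talking about filters.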

Knowing how the filters work

Now that we know how light behaves we can imagine how a filter works. Basically, when light passes through a filter, certain wavelengths are attenuated or blocked while others are allowed to pass.

This is normally achieved by coating the filter glass with thin layers of different materials that block or reflect the unwanted wavelengths.

The image shows that the filter glass has a coating that reflects light differently than normal uncoated glass.
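One way to picture this is to treat a filter as a transmission value for each wavelength, which is multiplied by the incoming light. The sketch below assumes an idealized "top-hat" filter that passes 90% of the light inside its band and nothing outside it; real filters have sloped edges and some leakage, and both the spectrum and the function names here are hypothetical.

```python
def bandpass_transmission(wavelength_nm, center_nm, width_nm, peak=0.9):
    """Idealized filter: `peak` transmission inside the passband, 0 outside."""
    half_width = width_nm / 2
    return peak if abs(wavelength_nm - center_nm) <= half_width else 0.0


def apply_filter(spectrum, center_nm, width_nm, peak=0.9):
    """Multiply each intensity by the filter transmission at its wavelength."""
    return {
        wavelength: intensity
        * bandpass_transmission(wavelength, center_nm, width_nm, peak)
        for wavelength, intensity in spectrum.items()
    }


# Hypothetical light source: equal intensity at three wavelengths (nm).
incoming = {450: 100, 550: 100, 656: 100}

# A 10nm-wide filter centered at 656nm keeps only the red component.
print(apply_filter(incoming, center_nm=656, width_nm=10))
```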

Showing how a filter works

Some time ago I posted a tweet showing how a svbony light pollution filter that I had purchased from Aliexpress a few weeks earlier worked.

Tweet svbony filter

On the left side of the photo you can see the spectrum of a mercury vapor fluorescent lamp, which I obtained using a homemade spectrograph. On the right side is the spectrum of the same lamp with the filter placed in front of the light input of the spectrograph.

In the image on the right we can see that the filter has blocked some visible wavelengths while allowing others to pass.

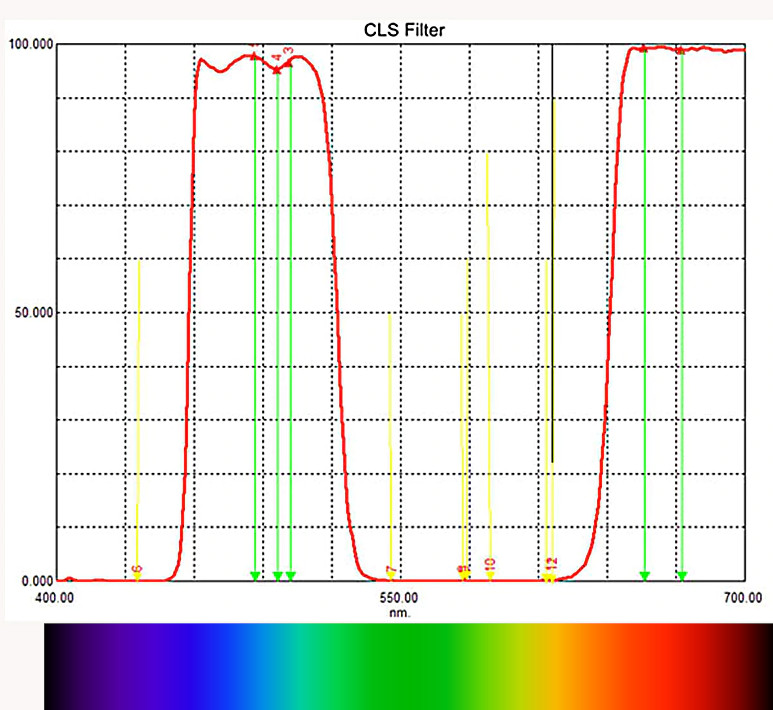

The graph of this filter's specifications is the following:

CLS svbony filter graph

As we can see, the graph indicates that all wavelengths are blocked except for the red and blue zones of the visible spectrum. If we compare it with the previous image we can see in the image on the right that the only wavelengths that it has allowed to pass are precisely the blue and the red ones.

Why would we want to use filters in astrophotography?

Filters are very useful in digital photography and especially in astrophotography. In scientific astrophotography, certain wavelengths must be isolated for specific studies, or signals that interfere with the ones actually being studied must be eliminated.

In amateur astrophotography, the most common use of filters is to keep light pollution out of our images, although they are also often used to isolate certain wavelengths and process them separately, as is the case with hydrogen-alpha (H-alpha) emissions.

Infrared radiation is also especially "harmful" to our images. Stars emit infrared radiation, which makes them appear much larger in our captures than they look to the naked eye. This effect is commonly called "Star Bloating" and can be attenuated by using filters that block infrared radiation.

Filters on digital cameras

If you have used a compact digital camera, a DSLR camera or a smartphone camera, you have used filters, most probably without knowing it.

Digital cameras use silicon sensors with millions of tiny photon detectors. When we take a photo and open the shutter, the sensor counts the photons that reach each detector and accumulates them in its internal memory.

When the shutter closes and the capture is complete, the camera translates those photon counts into the values of a matrix that makes up the final image. There are many more steps in the process, and multiple elements and factors that I have left out to make the concept easier to understand.

Monochrome cameras only capture the intensity of light at each pixel without obtaining information about the wavelength of the photons they detect. This means that they discard part of the image information, so they cannot obtain color information by themselves.

However, by using filters we can obtain the color information easily.

Color in digital photography

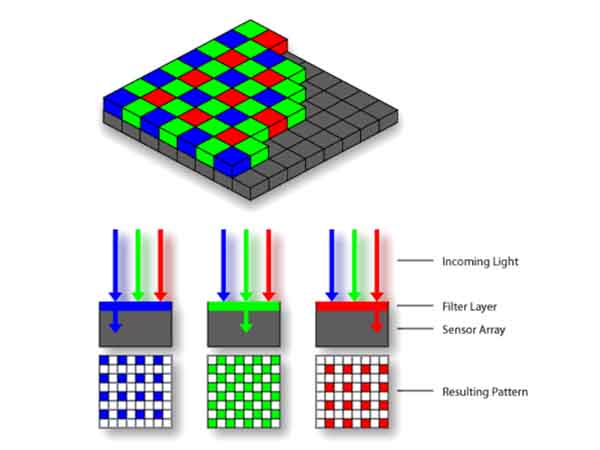

In digital photography the additive property of colors is used, so that with only three colors we can obtain all the others. These are the primary colors of the additive light system: red, green, and blue, usually abbreviated as RGB.

This principle is the one used in color televisions, projectors, smartphone screens, and of course in the digital camera sensors.
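The additive principle can be sketched in a few lines of Python. This is only an illustration with 8-bit values (0 to 255 per channel); the function name is made up for this example.

```python
def add_light(*colors):
    """Add RGB light sources channel by channel, clipping at the 8-bit maximum."""
    return tuple(min(255, sum(channel)) for channel in zip(*colors))


RED = (255, 0, 0)
GREEN = (0, 255, 0)
BLUE = (0, 0, 255)

print(add_light(RED, GREEN))        # yellow
print(add_light(RED, BLUE))         # magenta
print(add_light(RED, GREEN, BLUE))  # white
```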

Color cameras, also called OSC (One Shot Color) cameras, and DSLR cameras already include a way to capture color. The camera's sensor is covered by microscopic RGB filters that form a pattern over the sensor's light collectors. This pattern is called the Bayer Matrix or Bayer Mosaic, after its inventor Bryce Bayer, who worked at Kodak.

Bayer Matrix

The principle of operation of this matrix is that each pixel records a value for one color of the pattern; an interpolation algorithm then determines what color each pixel should be, based on its own value and the values of the adjacent pixels.

We could say that a color camera is a filtered camera, and because of this, it is usually less sensitive than a black and white camera.

In addition, color cameras have a filter that prevents infrared radiation from being detected by the sensor. The problem with this filter is that it also removes much of the deep-red and near-infrared signal that many objects in the sky emit. That is why it is very common for astrophotographers to "astro-convert" their camera, replacing that filter with a more suitable one or just removing it completely.
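The interpolation step can be illustrated with a deliberately simple sketch. It assumes an RGGB layout (other layouts exist) and fills in each missing color by averaging the same-colored samples in the surrounding 3x3 block; real cameras and processing software use more sophisticated algorithms. All names are hypothetical, and the frame is assumed to be at least 2x2 pixels.

```python
def bayer_color(row, col):
    """Color sampled at (row, col), assuming an RGGB pattern."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic(raw):
    """Return rows of (R, G, B) tuples from a raw Bayer frame (list of rows)."""
    height, width = len(raw), len(raw[0])
    image = []
    for r in range(height):
        out_row = []
        for c in range(width):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            counts = {"R": 0, "G": 0, "B": 0}
            # Collect same-colored samples from the 3x3 block around the pixel.
            for rr in range(max(0, r - 1), min(height, r + 2)):
                for cc in range(max(0, c - 1), min(width, c + 2)):
                    color = bayer_color(rr, cc)
                    sums[color] += raw[rr][cc]
                    counts[color] += 1
            pixel = {k: sums[k] / counts[k] for k in "RGB"}
            # Keep the value the pixel actually measured for its own color.
            pixel[bayer_color(r, c)] = raw[r][c]
            out_row.append((pixel["R"], pixel["G"], pixel["B"]))
        image.append(out_row)
    return image


# Uniform test scene: every red site reads 100, green 50, blue 10.
levels = {"R": 100, "G": 50, "B": 10}
raw = [[levels[bayer_color(r, c)] for c in range(4)] for r in range(4)]
print(demosaic(raw)[1][1])
```

For a uniformly colored scene like this one, every reconstructed pixel ends up with the same three values, which is a quick sanity check for the interpolation.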

Types of astronomical filters

Although there are a variety of astronomical filters, I will only name the most used filters in amateur astronomy and their uses. There are many of them suitable for all kinds of applications.

Filters used in monochrome cameras

Luminance Filter : Filter used to obtain the highest possible monochrome signal from the photographed object. It is actually a filter that lets only the visible spectrum pass and blocks infrared and ultraviolet.

RGB Filters : These are three filters that each pass only the red, green or blue wavelengths. Combining the images taken through these filters with the signal obtained through a luminance filter makes it possible to obtain color images with monochrome cameras.

RGB filters do not make sense for a color camera since it includes them in the Bayer matrix and filtering a color twice will make us lose signal. However, the luminance filter prevents star bloating by blocking infrared radiation, and is also used in color cameras that do not include an infrared filter.
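The combination of luminance and RGB frames from a monochrome camera can be illustrated, for a single pixel, with the simplified sketch below. It assumes the color ratios come from the R, G and B frames while the brightness comes from the luminance frame. Image processing software typically does this in a more elaborate way, so treat the function (whose name is made up) as an illustration of the idea rather than a processing recipe.

```python
def lrgb_pixel(lum, red, green, blue):
    """Scale an RGB pixel so its average brightness matches the luminance value."""
    rgb_brightness = (red + green + blue) / 3
    if rgb_brightness == 0:
        # No color information at all: fall back to a gray pixel.
        return (lum, lum, lum)
    scale = lum / rgb_brightness
    # Note: results are not clipped to any particular bit depth.
    return (red * scale, green * scale, blue * scale)


# A reddish pixel whose luminance frame recorded a brighter signal.
print(lrgb_pixel(100, 60, 30, 30))
```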

Narrowband filters

Narrowband filters, as the name suggests, allow only a small part of the spectrum to pass through. Many of the deep sky objects emit in a series of wavelengths of interest to astronomers, therefore specific filters have been created for those frequencies to isolate these signals and process them separately. The most common are:

H-Alpha filter :

The H-Alpha filter blocks all emissions except a narrow band of the spectrum around 656.28nm. This particular wavelength is emitted by the ionized hydrogen in the gas clouds in space. The filter is also widely used in solar astronomy, in conjunction with an infrared filter that prevents infrared rays from deteriorating the coating of the H-Alpha filter.

OIII Filter :

The OIII or Doubly Ionized Oxygen filter passes only a narrow band containing the emission lines at 495.9nm and 500.7nm, which correspond to turquoise and cyan colors. These filters are very useful for observing planetary and diffuse nebulae in which there are high concentrations of OIII.

SII Filter :

Ionized Sulfur filters pass the light that some nebulae emit at about 671.6nm and 673.1nm.

Together, these three filters make it possible to process images with the so-called Hubble Palette, which gives them a look similar to that of the space telescope's photos.

Detail photo of the Carina Nebula by NASA, ESA, and the Hubble SM4 ERO team
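In the Hubble Palette, the SII frame is usually assigned to the red channel, H-Alpha to green and OIII to blue. A minimal sketch of that mapping, assuming three grayscale frames of the same size stored as lists of rows (the function name and the tiny example frames are hypothetical):

```python
def hubble_palette(sii, h_alpha, oiii):
    """Build RGB pixels from three frames: SII -> red, H-Alpha -> green, OIII -> blue."""
    return [
        [(s, h, o) for s, h, o in zip(s_row, h_row, o_row)]
        for s_row, h_row, o_row in zip(sii, h_alpha, oiii)
    ]


# Tiny 1x2 example frames with made-up pixel values.
sii_frame = [[10, 20]]
ha_frame = [[200, 180]]
oiii_frame = [[60, 90]]

print(hubble_palette(sii_frame, ha_frame, oiii_frame))
```

In practice each channel is usually stretched and balanced before combining, since H-Alpha tends to be much stronger than the other two signals.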

These filters can be used in both monochrome and color cameras as they isolate very narrow wavelengths.

Filters for use in color cameras

IR/UV Filters :

In essence it is the same luminance filter that is used in monochrome cameras to prevent star bloating in the image.

Dual, Triple, Narrowband Filters ... :

Dual, Triple, and other multi-band narrowband filters are useful for color cameras because the combination of narrow bands can be captured in one shot, without having to capture each one separately.

Light pollution filters

CLS :

CLS or "City Light Suppression" filters partially block the wavelengths emitted by the most common lights in cities, such as sodium vapor lamps. Today there are CLS filters that also block some of the emission from LED lights and other sources of unwanted lighting.

CLS-CCD :

This type of filter is specific for cameras that do not already have IR/UV filters. This filter combines the ability to filter infrared and ultraviolet along with the ability to block the same frequencies as a normal CLS filter.

Narrowband Filters :

Although narrowband filters are not light pollution filters per se, they block the vast majority of the spectrum and can therefore be used to capture images at highly light-polluted locations.

Conclusions

There are many filters used in astronomy (especially planetary) for specific applications that I have not included in the article due to lack of knowledge since I normally focus on deep sky astrophotography. Maybe in the future I could write more about specific filters as I learn more about them and can try them out.

I hope this article has shed a little light (ba-dum-tss) on the subject of filters so we can better understand which filters interest us according to the use we want to give them.
